Clinicians accustomed to treating X-rays as objective anchors in uncertain cases are meeting a generative AI fake that looks every bit real on the first pass and still defies easy verification on the second. A peer-reviewed study found that text-to-image models can fabricate chest radiographs so anatomically plausible that radiologists flagged the forgeries correctly only 41% of the time when not warned of manipulation. Even after an alert, accuracy rose to roughly 75%, leaving a troubling gap. Automated screeners did not fare better; in several tests, the very model family that generated the images failed to tell real from synthetic. This collision of realism and ambiguity undermines long-standing habits: trusting pixel patterns, correlating with short clinical notes, and moving quickly in high-volume workflows. The stakes extend beyond misreads. Synthetic pathology can enable insurance scams, sway legal disputes, or poison training corpora, degrading future models in a self-reinforcing loop that blurs the line between signal and spoof.

How Deepfakes Break Clinical and Technical Assumptions

What makes these images so compelling is not just high resolution but coherent anatomy under subtle constraints: rib spacing tracks patient size, cardiomediastinal contours stay proportionate, and text overlays, if any, mimic department styles. Simple artifact-spotting no longer suffices. Moreover, legacy protections in imaging pipelines were not built for this threat. DICOM headers can be edited, PACS archives mirror tampered files, and audit logs often track access rather than content integrity. Detector models trained on obvious compositing artifacts stumble on prompt-driven synthesis that bakes in realistic noise and equipment signatures. The result is a dual failure mode: humans over-trust context, while algorithms over-fit to stale tells. In triage settings, a forged pneumothorax can trigger unnecessary interventions; in oncology, a phantom lesion can reroute care plans. The cost is not hypothetical—it materializes wherever verification lags generation.
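To make the gap between access logging and content integrity concrete, here is a minimal sketch of a content digest, assuming a study can be reduced to a dictionary of header fields plus raw pixel bytes. The function name `content_digest`, the field names, and the sample values are hypothetical and not taken from any DICOM toolkit; a real pipeline would canonicalize the actual DICOM dataset instead.

```python
import hashlib
import json


def content_digest(header: dict, pixel_data: bytes) -> str:
    """Return a SHA-256 hex digest covering both header fields and pixel bytes."""
    # Serialize the header deterministically so key order cannot change the digest.
    canonical_header = json.dumps(
        header, sort_keys=True, separators=(",", ":")
    ).encode("utf-8")
    h = hashlib.sha256()
    # Length-prefix the header so bytes cannot be shifted across the
    # header/pixel boundary without changing the digest.
    h.update(len(canonical_header).to_bytes(8, "big"))
    h.update(canonical_header)
    h.update(pixel_data)
    return h.hexdigest()


# Hypothetical study: field names and values are illustrative only.
header = {"PatientID": "ANON-001", "Modality": "CR", "StudyDate": "20240101"}
pixels = bytes(range(256)) * 4

# Digest recorded at acquisition time (for example, in an append-only log).
recorded = content_digest(header, pixels)

# Two kinds of tampering: an edited header field and a single flipped pixel bit.
tampered_header = dict(header, StudyDate="20240102")
tampered_pixels = pixels[:-1] + bytes([pixels[-1] ^ 0x01])

print(content_digest(header, pixels) == recorded)           # True
print(content_digest(tampered_header, pixels) == recorded)  # False
print(content_digest(header, tampered_pixels) == recorded)  # False
```

A digest like this only helps if the recorded value is stored somewhere the attacker cannot rewrite. An archive that mirrors a tampered file together with a recomputed hash offers no protection.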

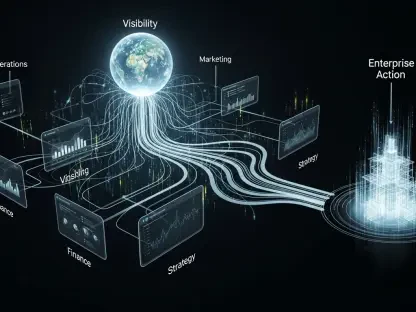

Building Defenses: From Capture to Courtroom

Mitigation now hinges on layered controls that start at acquisition and persist to adjudication. At the scanner, cryptographic signing of pixel data and headers, using Ed25519 with SHA-256, can anchor provenance, with keys protected in hardware security modules and enforced via secure boot on modalities. Hospitals are piloting C2PA-based manifests embedded in DICOM objects to bind images to device IDs, timestamps, and chain-of-custody hashes; verification can run inside PACS viewers with fail-closed policies. In transit, mTLS and certificate pinning reduce interception risk, while immutable logs streamed to a SIEM preserve evidence. On the detection front, ensembles that fuse forensic cues (upsampling traces, PRNU inconsistencies) with clinical context (HL7 FHIR orders, vitals, and prior exams) perform better than image-only classifiers. Training should include drills where radiologists reconcile pixels with chart data, device metadata, and acquisition notes. Regulators, meanwhile, can mandate watermark support akin to SynthID-style signals, require procurement of provenance-capable devices in the next buying cycle, and set liability frameworks that incentivize prompt disclosure when integrity checks fail. The most pragmatic next steps are concrete: turn on signing where vendors support it, pilot C2PA manifests on one modality line, route unsigned studies to secondary review, and benchmark detectors quarterly against a living dataset that includes the latest model families.
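The sign-at-capture and fail-closed routing steps can be sketched in a few lines. This is an illustration under stated assumptions, not a vendor or DICOM implementation. It swaps in HMAC-SHA256 from the Python standard library as a stand-in for the Ed25519 signatures described above, so it stays self-contained; a real deployment would use an asymmetric key held in an HSM. The key value, function names, and queue names are all hypothetical.

```python
import hashlib
import hmac
from typing import Optional

# Stand-in secret for the demo. The article describes Ed25519 keys in an HSM;
# HMAC is used here only to keep the sketch dependency-free.
DEVICE_KEY = b"demo-key-not-for-production"


def sign_study(header_bytes: bytes, pixel_data: bytes,
               key: bytes = DEVICE_KEY) -> bytes:
    """Tag the SHA-256 digest of length-prefixed header bytes plus pixel bytes."""
    digest = hashlib.sha256(
        len(header_bytes).to_bytes(8, "big") + header_bytes + pixel_data
    ).digest()
    return hmac.new(key, digest, hashlib.sha256).digest()


def route_study(header_bytes: bytes, pixel_data: bytes,
                signature: Optional[bytes], key: bytes = DEVICE_KEY) -> str:
    """Fail-closed routing: only a verified study reaches the normal worklist."""
    if signature is None:
        return "secondary_review"  # unsigned study gets a second look
    expected = sign_study(header_bytes, pixel_data, key)
    # compare_digest avoids leaking information through comparison timing.
    if hmac.compare_digest(expected, signature):
        return "normal_worklist"
    return "quarantine"  # signed, but content no longer matches


# Hypothetical study bytes, for illustration only.
hdr = b'{"Modality":"CR","DeviceID":"XR-07"}'
px = bytes(1024)
sig = sign_study(hdr, px)

print(route_study(hdr, px, sig))                 # normal_worklist
print(route_study(hdr, px, None))                # secondary_review
print(route_study(hdr, px[:-1] + b"\x01", sig))  # quarantine
```

The point of the design is that the default outcome is never "accept": a missing signature and a failed verification both divert the study away from routine reading, which matches the fail-closed policy described above.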