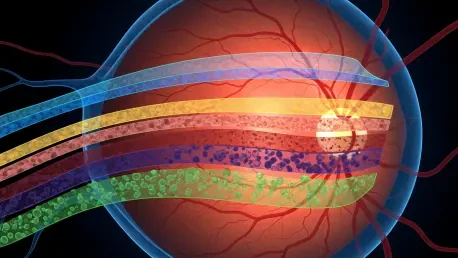

Retinal care faced a bottleneck: life-altering decisions hinged on tools that flattened inherently three-dimensional anatomy into independent slices, leaving algorithms and clinicians to interpolate across the gaps between them. That tension set the stage for OCTCube-M, a three-dimensional, multi-modal foundation model that treats optical coherence tomography (OCT) as a native 3D signal while aligning it with infrared retinal imaging (IR) and en face (EF) views. Reported by Liu and colleagues in Nature Biomedical Engineering, the work proposed that preserving volumetric continuity and learning shared cross-modal representations could sharpen both diagnosis and prognosis across age-related macular degeneration (AMD), diabetic retinopathy, retinal vein occlusion, and other common threats to vision. Rather than bolting together task-specific models, the approach built a single representational backbone that transferred across devices, patient cohorts, and clinical tasks. In practice, that meant a model that could detect a microstructural lesion in 3D OCT, corroborate it with a vascular or pigment cue in IR, and then reason over EF patterns to forecast progression. The result read as a step change: a unified system designed for real-world heterogeneity, not just the curated consistency of a single scanner or trial.

Why Retina Care Needs a 3D, Multi-Modal Shift

The most widely used ophthalmic imaging modality, OCT, resolves retinal layers at micrometer scale, yet much of its computational handling still reduces volumes into independent B-scans or thin slabs, severing spatial continuity and weakening sensitivity to context-bound abnormalities. Tiny hyperreflective foci, drusen morphology, intraretinal cysts, and subtle ellipsoid zone disruptions often emerge across neighboring slices; ignoring that neighborhood sacrifices clinical signal. At the same time, IR highlights vasculature, melanin-rich structures, and surface changes that may be faint or ambiguous on a single OCT slice, while EF condenses macular patterns that can index disease stage or activity. Clinicians naturally synthesize these cues during interpretation. Algorithms that fail to fuse them risk overfitting to artifacts, missing early disease, and performing inconsistently when imaging protocols vary between visits or across sites.

Building on this tension, the argument for a 3D-native, multi-modal paradigm becomes operational rather than academic. Early detection in AMD demands recognizing the geometry and internal reflectivity of drusen in relation to the retinal pigment epithelium and photoreceptor layers—relationships that live in 3D. Evaluating diabetic macular edema hinges on differentiating cystoid spaces from shadowing artifacts and co-locating fluid with vessel patterns, a task that benefits from OCT-IR alignment. Planning anti-VEGF therapy or monitoring geographic atrophy progression requires longitudinal consistency that exposes subtle change, not just cross-sectional features. Fragmented pipelines have struggled to deliver this cohesion, especially under clinic-grade variability in scan density, field of view, motion artifacts, and device calibration. A foundation model trained across modalities and devices offers a path toward durable performance where it matters most: routine care.

The OCTCube-M Family at a Glance

OCTCube-M consists of three tiers tailored to distinct clinical and technical needs, scaling from uni-modal to tri-modal capability while maintaining a shared design principle: learn volumetric structure first, then align complementary signals at scale. The base model, OCTCube, trained on 26,605 OCT volumes—about 1.62 million axial slices—leverages 3D-native architectures to preserve continuity across B-scans and capture multi-scale microarchitecture. Rather than treat each slice as a separate image, OCTCube aggregates context across depth and lateral extents, improving sensitivity to localized lesions that only stand out when seen against their neighborhood. This baseline alone repositions volumetric understanding as the default, replacing piecemeal inference with integrated 3D reasoning that maps more closely to how retinal pathology actually appears.
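A toy sketch can make the slice-versus-volume argument concrete. It is not OCTCube's architecture; the volume layout, the signal values, and the threshold are all illustrative assumptions. A faint signal is planted at the same location in three adjacent B-scans: thresholding each slice on its own misses it, while summing that location across neighboring slices recovers it.

```python
# Toy illustration only (hypothetical names and values, not the published model).
# Assumed layout: volume[slice][row][col], values are reflectivity deviations.

def make_volume(n_slices=5, height=4, width=4):
    """Return a zero volume with a faint signal at (row=1, col=2) in slices 1..3."""
    volume = [[[0.0] * width for _ in range(height)] for _ in range(n_slices)]
    for s in (1, 2, 3):
        volume[s][1][2] = 0.4  # too weak to cross the threshold in any single slice
    return volume

def per_slice_hits(volume, threshold):
    """2D view: flag a slice only if some voxel inside that slice exceeds the threshold."""
    return [any(v > threshold for row in sl for v in row) for sl in volume]

def cross_slice_hits(volume, threshold, window=3):
    """3D view: sum each (row, col) location over `window` adjacent slices."""
    n, h, w = len(volume), len(volume[0]), len(volume[0][0])
    hits = []
    for start in range(n - window + 1):
        for r in range(h):
            for c in range(w):
                total = sum(volume[start + k][r][c] for k in range(window))
                if total > threshold:
                    hits.append((start, r, c))  # (first slice of window, row, col)
    return hits

volume = make_volume()
slice_flags = per_slice_hits(volume, threshold=1.0)
volume_hits = cross_slice_hits(volume, threshold=1.0)
print(slice_flags)  # no single slice is flagged
print(volume_hits)  # the window starting at slice 1 is flagged at (row 1, col 2)
```

A learned 3D encoder does something far richer than a fixed sum, but the underlying point is the same: evidence distributed across B-scans is only visible to a model that looks across them.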

The bi-modal variant, OCTCube-IR, extends this foundation using 26,685 OCT–IR image pairs to link structural clarity with vascular and pigmentation cues. By aligning volumetric OCT features with IR’s surface-sensitive signatures, it enables cross-modal retrieval and data completion: when IR is missing or noisy, OCT can anchor interpretation; when OCT quality dips due to motion or segmentation errors, IR can stabilize the read. At the top of the stack, OCTCube-EF integrates more than 4 million OCT slices with over 400,000 EF images sourced from six multicenter clinical trials across 23 countries, targeting prognostic tasks that demand broad generalization. A prime example is forecasting the growth of geographic atrophy in advanced AMD, where EF macular patterns and OCT-resolved layer integrity jointly encode risk. Together, the family provides modular routes to deployment: start with 3D OCT for classification, add IR for resilience and search, and incorporate EF for longitudinal forecasts.

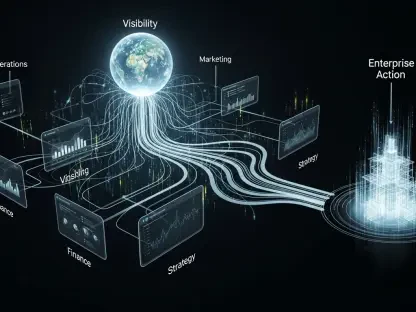

COEP: The Contrastive Engine Under the Hood

At the core of this model family sits COEP, a contrastive learning framework that creates a shared embedding across 3D OCT volumes and 2D IR and EF images. The method pairs matched samples—an OCT volume with its corresponding IR or EF view—and contrasts them against unmatched pairs, encouraging the network to retain modality-specific strengths while enforcing semantic alignment. In practical terms, a lesion that appears as a hyporeflective cyst on OCT and as a localized darkening on IR is pulled together in the latent space, whereas unrelated images are pushed apart. This simple learning rule, scaled across millions of slices and hundreds of thousands of paired images, yields features that travel across scanners, vendors, and acquisition protocols without requiring handcrafted registration or brittle rules.
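The pull-together, push-apart rule described above can be sketched with a symmetric InfoNCE loss of the kind used in CLIP-style training. This is an assumption for illustration: the article does not specify COEP's exact objective, and the function names, the temperature value, and the tiny two-dimensional embeddings are all hypothetical.

```python
import math

def l2_normalize(vec):
    """Scale a non-zero vector to unit length so dot products become cosine similarities."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def cross_entropy_diagonal(logits):
    """Mean cross-entropy where the correct match for row i is column i."""
    losses = []
    for i, row in enumerate(logits):
        m = max(row)  # subtract the max before exponentiating, for numerical stability
        log_sum_exp = m + math.log(sum(math.exp(v - m) for v in row))
        losses.append(log_sum_exp - row[i])
    return sum(losses) / len(losses)

def symmetric_info_nce(oct_emb, img_emb, temperature=0.07):
    """oct_emb[i] and img_emb[i] form a matched pair; every other combination is a negative."""
    a = [l2_normalize(v) for v in oct_emb]
    b = [l2_normalize(v) for v in img_emb]
    logits = [[dot(x, y) / temperature for y in b] for x in a]
    logits_t = [list(col) for col in zip(*logits)]  # image-to-OCT direction
    return 0.5 * (cross_entropy_diagonal(logits) + cross_entropy_diagonal(logits_t))

# Two OCT volume embeddings and two 2D image embeddings (made-up values).
oct_batch = [[1.0, 0.0], [0.0, 1.0]]
aligned = symmetric_info_nce(oct_batch, [[0.9, 0.1], [0.1, 0.9]])
shuffled = symmetric_info_nce(oct_batch, [[0.1, 0.9], [0.9, 0.1]])
print(aligned < shuffled)
```

The loss is small when each OCT embedding sits closest to its own paired image and large when the pairing is scrambled, which is the gradient signal that draws matched samples together in the shared space.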

Several capabilities flow naturally from this alignment. Cross-modal retrieval becomes possible: submit an IR image and retrieve the closest OCT volumes that share pathologic signatures, or start from OCT and locate EF images that match surface-level patterns. Transfer learning improves, since the shared space serves as a neutral zone where downstream tasks can fine-tune with modest labeled data. Most importantly, interpretability strengthens. When OCT-, IR-, and EF-derived features co-localize in embedding space, clinicians can trace predictions back to known anatomic and pathophysiologic correlates. Rather than black-box saliency on a single slice, the system can surface tri-modal concordance, flagging when modalities agree or disagree and offering uncertainty cues where alignment breaks down.
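Once modalities share an embedding space, cross-modal retrieval reduces to a nearest-neighbor search. The sketch below assumes the embeddings have already been produced by some encoder; the case identifiers, the three-dimensional vectors, and the `retrieve` helper are hypothetical and stand in for whatever index a real deployment would use.

```python
import math

def cosine(a, b):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def retrieve(query_emb, library, k=2):
    """Return the k (case_id, similarity) pairs most similar to the query, best first."""
    scored = [(case_id, cosine(query_emb, emb)) for case_id, emb in library.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:k]

# Hypothetical OCT volume embeddings in the shared space.
oct_library = {
    "oct_vol_A": [0.9, 0.1, 0.0],
    "oct_vol_B": [0.0, 1.0, 0.0],
    "oct_vol_C": [0.7, 0.7, 0.1],
}
ir_query = [1.0, 0.0, 0.0]  # hypothetical embedding of today's IR image

top = retrieve(ir_query, oct_library, k=2)
print([case_id for case_id, _ in top])
```

The similarity scores themselves can double as a rough concordance signal: a query whose best match scores low is a candidate for the kind of uncertainty flag described above.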

Training at Scale, Built for Diversity

Performance at the point of care often falters not for lack of algorithmic ingenuity but because models overfit to narrow datasets acquired on a single device under ideal conditions. OCTCube-M confronts that risk by pretraining on one of the largest and most heterogeneous retinal repositories assembled: tens of thousands of OCT volumes, millions of 2D slices, and hundreds of thousands of EF images spanning multiple diagnoses and clinical environments. This breadth counters device bias—signal differences caused by proprietary optics, noise profiles, or segmentation idiosyncrasies—and cushions the model against cohort-specific quirks such as demographic prevalence or site-level workflow patterns. The result is not just accuracy on a benchmark but stability under noise, missing modalities, and protocol shifts common in busy clinics.

Moreover, scale here is functional, not decorative. A 3D-native encoder must learn volumetric motifs that recur across conditions—layer undulations, outer retinal disruptions, intraretinal fluid geometries—and it must do so while reconciling these features with 2D projections that compress structure into surface cues. COEP provides the glue, but only broad exposure ensures that alignment covers edge cases rather than just canonical presentations. Exposure to six multicenter clinical trials across 23 countries in the tri-modal variant is especially notable for prognostic modeling. Longitudinal outcomes are where domain shift often hurts most; patients move, devices change midcourse, and site practices evolve. Pretraining on diverse trials simulates these realities, producing a backbone more likely to hold its footing when conditions change between visits.

What the Numbers Say

Across eight major retinal diseases, OCTCube posted state-of-the-art diagnostic accuracy, with notable gains in sensitivity for small, spatially localized lesions and inter-layer irregularities that slice-based models tend to dilute. The advantage derived from true 3D context: edema pockets that straddle slices, early outer retinal atrophy patterns that require layer continuity, and microstructural cues that become visible only when aggregated across adjacent B-scans. These enhancements did not come at the cost of specificity. By grounding decisions in volumetric neighborhoods rather than isolated frames, the model better distinguished artifacts such as shadowing or speckle from true pathology, which historically confounded 2D pipelines.

Equally consequential was generalization. When evaluated on unseen devices, institutions, and patient cohorts, the models maintained robust performance, suggesting resilience to common sources of drift such as different scan densities or distinct noise characteristics. OCTCube-IR demonstrated retrieval and inference stability when one modality was degraded or absent, retrieving nearest neighbors across modalities to inform interpretation. OCTCube-EF’s prognostic forecasts of geographic atrophy growth captured longitudinal risk trajectories, supporting stratification that could influence monitoring intervals and the timing of interventions in advanced AMD. Such breadth—classification, retrieval, and prognosis—showed that a single foundation model could extend beyond static labels into the longitudinal questions that shape patient care.

What Each Variant Adds

Each member of the OCTCube-M family addresses a distinct pressure point in retinal care. OCTCube, the uni-modal backbone, focuses on volumetric structural understanding and broad diagnostic tasks. It is the workhorse for clinics where OCT is routine, immediately improving detection of subtle microarchitecture while slotting into existing workflows. OCTCube-IR then adds a bi-modal channel that reflects how clinicians already think: correlate structural slices with surface and vascular cues. In practice, this addition reduced brittleness. If an OCT volume suffered motion artifacts or segmentation glitches, the aligned IR view anchored interpretation. If the IR image was noisy or missing due to protocol differences, OCT carried the load, with the shared embedding providing a consistent representation for downstream classifiers.

OCTCube-EF, the tri-modal expansion, pivoted from classification resilience to prognostic power. By integrating EF (which naturally summarizes macular patterns related to atrophy borders, foveal sparing, and lesion topology) with volumetric OCT structure, and by training on data drawn from six multicenter clinical trials across 23 countries, the model forecast geographic atrophy growth rates. This shift matters because therapeutic development and clinical management hinge on predicting change, not just labeling the present. Accurate forecasts can inform individualized follow-up schedules, guide intervention timing, and support trial enrichment by identifying patients likely to progress. OCTCube-EF’s scale of pretraining and its contrastive alignment offered a route to those forecasts without bespoke, trial-specific models for every device and site.

How This Could Change Clinical Workflows

Adopting a 3D-native, multi-modal foundation model could reshape several parts of the clinic day. For triage, higher sensitivity to fine, localized lesions improves routing decisions, flagging cases that warrant urgent attention and avoiding unnecessary referrals. During interpretation, cross-modal retrieval operates like a diagnostic copilot: present an IR image from today’s visit and fetch analogous OCT volumes from past encounters or from a reference library of well-annotated cases. That capability can steady decision-making when image quality varies or protocols shift because of throughput demands. Moreover, volumetric reasoning cuts down on ambiguous reads by leveraging neighborhood context instead of over-relying on a single slice or slab.

Clinical trial workflows also stand to benefit. Trial endpoints rooted in tri-modal biomarkers—layer integrity on OCT, macular patterning on EF, and vascular cues on IR—promise more objective and sensitive measures of progression. This, in turn, could shorten study durations or reduce sample sizes for certain questions, by enriching cohorts with patients most likely to exhibit measurable change. In AMD, where geographic atrophy management relies on careful tracking of lesion borders and foveal involvement, OCTCube-EF’s forecasts could help align visit schedules with expected risk windows, optimizing both patient burden and clinic capacity. All of this points to a steadying influence in environments marked by variability: busy retina clinics, multi-center networks, and longitudinal care where consistency is notoriously hard to maintain.

From Bench to Bedside: Practical Hurdles

Translation hinges on more than metrics. Interoperability and standards determine whether a model can meet messy reality. Consistent handling of DICOM and proprietary formats, device-specific calibration, and metadata integrity are prerequisites for safe deployment. Pre- and post-processing pipelines must be deterministic and transferable, with versioning that enables auditability after software updates. These nuts-and-bolts details decide whether an otherwise excellent model produces repeatable outputs when the same eye is scanned on different machines or when a firmware patch changes noise characteristics. Without that scaffolding, performance can drift silently, undermining trust before anyone notices.

Privacy and security remain unavoidable constraints. Training at scale invites data governance challenges; deployment across hospital networks requires controls that satisfy health data regulations without throttling throughput. Federated learning and on-premises inference can mitigate risk, but they demand engineering for latency, encryption, and failover. Human factors also matter. Clinician-facing interfaces should surface aligned cross-modal features, show uncertainty when modalities disagree, and explain predictions through anatomy-aware overlays and textual rationales linked to known pathophysiology. Finally, governance completes the picture: prospective, multi-site evaluations; post-market surveillance to catch bias or drift; and clear escalation paths when the model signals out-of-distribution inputs. These are the levers that convert promising research into durable clinical infrastructure.
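Surveillance for drift can start with very simple statistics. The sketch below is one possible check, not a prescribed method: it compares the mean of a recent window of per-scan uncertainty scores against a baseline window and raises a flag when the z-score exceeds a threshold. The function name, the threshold of 3.0, and the sample values are assumptions chosen for illustration.

```python
import statistics

def drift_alert(baseline, recent, z_threshold=3.0):
    """Flag a review when the recent mean uncertainty moves far from the baseline mean.

    The z-score divides the shift in means by the baseline standard error
    for a sample the size of the recent window.
    """
    base_sd = statistics.stdev(baseline)
    if base_sd == 0:
        raise ValueError("baseline has zero variance; z-score is undefined")
    standard_error = base_sd / (len(recent) ** 0.5)
    z = (statistics.mean(recent) - statistics.mean(baseline)) / standard_error
    return abs(z) > z_threshold, z

# Hypothetical uncertainty scores (for example, predictive entropy per scan).
baseline_scores = [0.10, 0.12, 0.11, 0.09, 0.13, 0.10, 0.11, 0.12]
stable_week = [0.11, 0.10, 0.12, 0.11]
shifted_week = [0.30, 0.28, 0.33, 0.31]  # e.g. after a firmware update

stable_flag, stable_z = drift_alert(baseline_scores, stable_week)
shifted_flag, shifted_z = drift_alert(baseline_scores, shifted_week)
print(stable_flag, shifted_flag)
```

A production monitor would likely track calibration as well and use tests suited to non-normal distributions, but even a check this small gives an escalation path something concrete to fire on.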

Why It Matters Beyond Ophthalmology

The principles demonstrated here—3D-native modeling combined with contrastive multi-modal alignment—carry obvious relevance to other specialties that juggle heterogeneous imaging. Oncology could align PET’s metabolic signals with CT’s anatomy and MRI’s soft-tissue contrast to stage tumors and predict response, using a shared embedding to reconcile modalities collected on different scanners and schedules. Cardiology could tie echocardiography’s real-time dynamics to cardiac MRI’s structural fidelity, improving phenotyping in cardiomyopathies where tissue characterization and motion patterns both matter. Neurology could fuse 3D MRI with diffusion and functional imaging to anticipate progression in neurodegeneration, where longitudinal subtlety outpaces human perception.

Just as important is the foundation-model strategy. Pretraining across large, diverse, multi-institutional datasets provides a bedrock of generalization that makes downstream fine-tuning economical and robust. Contrastive alignment like COEP then stitches modalities into a single language, enabling cross-modal retrieval, resilience to missing data, and interpretable reasoning that traces back to clinical correlates. The payoff looks similar across domains: fewer brittle, siloed pipelines; more durable performance under device change; and a shift from static classification toward prognosis and longitudinal guidance. In effect, OCTCube-M illustrates a blueprint for clinical AI that respects the physics and practice of imaging rather than forcing them into uniform molds.

Turning Capability Into Care: What Should Happen Next

Real-world impact will require steps that are concrete, staged, and measurable. Health systems should prioritize pilot deployments in retina clinics that already archive OCT, IR, and EF, using a shadow mode to compare model outputs with clinician reads over several months. Imaging vendors should expose stable APIs for volume ingestion and metadata, enabling on-device pre-processing and deterministic reconstruction that preserve cross-site consistency. Informatics teams should implement audit trails and version locking, so that each inference can be traced to the exact model hash and pre-processing pipeline. These actions would establish a baseline for reliability before clinical decisions depend on the system.
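The audit-trail idea, tying each inference to a model hash and a pre-processing pipeline, can be sketched with standard hashing. Everything here is a hypothetical illustration: the record fields, the configuration keys, and the byte strings standing in for model weights are assumptions, not part of any vendor API.

```python
import hashlib
import json

def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()

def audit_record(model_bytes, pipeline_config, scan_id, prediction):
    """Bind one inference to the exact model weights and pre-processing settings."""
    # sort_keys makes the serialization, and therefore the hash, independent of key order.
    config_json = json.dumps(pipeline_config, sort_keys=True, separators=(",", ":"))
    return {
        "scan_id": scan_id,
        "model_sha256": sha256_hex(model_bytes),
        "pipeline_sha256": sha256_hex(config_json.encode("utf-8")),
        "prediction": prediction,
    }

# Placeholder bytes stand in for serialized model weights.
record_a = audit_record(b"fake-weights-v1", {"resample_um": 10, "normalize": "zscore"}, "scan-001", "GA")
record_b = audit_record(b"fake-weights-v1", {"normalize": "zscore", "resample_um": 10}, "scan-002", "GA")
record_c = audit_record(b"fake-weights-v2", {"resample_um": 10, "normalize": "zscore"}, "scan-003", "GA")

print(record_a["pipeline_sha256"] == record_b["pipeline_sha256"])  # same settings, same hash
print(record_a["model_sha256"] == record_c["model_sha256"])        # different weights, different hash
```

Storing these two digests alongside every prediction lets a later reviewer confirm whether two discordant reads came from the same locked version or from either side of an update.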

Clinicians and researchers should then focus on three practical moves. First, assemble reference libraries of adjudicated cases spanning devices and diagnoses, powering cross-modal retrieval and offering a safety net when image quality fluctuates. Second, define governance triggers, such as shifts in calibration curves or sudden changes in uncertainty distributions, that automatically initiate reviews or temporary rollbacks. Third, tailor training extensions to local needs: add fundus autofluorescence for atrophy tracking, or fluorescein angiography for ischemia mapping, without retraining from scratch by leveraging the modular structure of OCTCube-M. Taken together, these steps would translate a promising foundation model into dependable clinical support, turning 3D-native, multi-modal intelligence into day-to-day gains in accuracy, efficiency, and patient-specific planning.