Modern corporate environments are witnessing a silent revolution in which the traditional boundary between professional performance and private mental health is rapidly dissolving into a stream of continuous data. As organizations grapple with staggering economic figures, such as the estimated $1 trillion lost annually in global productivity due to depression and anxiety, they are increasingly turning to automated solutions to safeguard their bottom lines. These well-being applications, often integrated into standard office software or provided as mandatory employee benefits, promise to identify burnout before it results in a leave of absence. However, while the marketing materials focus on compassion and early intervention, the underlying reality is far more complex and intrusive. These tools operate by harvesting thousands of data points from an individual’s daily interactions, effectively turning the workplace into a psychological laboratory where every keystroke and vocal inflection is scrutinized. By framing surveillance as a health benefit, institutions have managed to bypass many of the privacy protections that traditionally govern the monitoring of employees.

The Mechanics of Digital Behavioral Analysis

Automated Psychological Profiling: Analyzing Hidden Signals

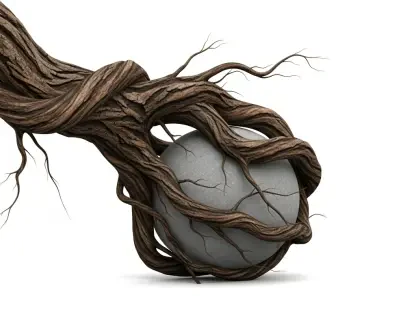

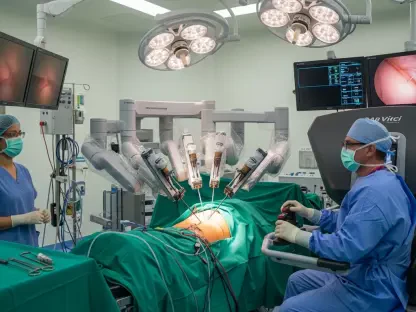

The technical architecture of these well-being systems relies on the collection and interpretation of what researchers call behavioral traces. Unlike traditional therapy, which depends on a patient’s self-reporting, these AI-driven tools monitor subtle changes in how an individual interacts with their digital environment. For instance, voice analysis algorithms evaluate recordings from video conferences to detect shifts in pitch, rhythm, and volume that might correlate with high stress levels. Similarly, linguistic processing tools scan emails and chat messages for emotional tone and word choice, looking for patterns that suggest withdrawal or agitation. Beyond communication, the software tracks metadata from smartphones, such as changes in sleep schedules or the frequency of social interactions, to build a comprehensive probability estimate of a person’s psychological state. This constant monitoring creates a digital shadow of the user, where the algorithm attempts to “read” the mind of the worker through the artifacts of their digital life.
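To make the idea of a "probability estimate" concrete, the following is a minimal sketch of how several behavioral signals could be folded into a single score. It is an illustration under stated assumptions, not a description of any real product: the feature names, the weights, the bias term, and the `stress_probability` function are all hypothetical, and a logistic function is used only because it is a common way to map a weighted sum onto a value between 0 and 1.

```python
import math

# Hypothetical weights for illustration only; not taken from any real system.
WEIGHTS = {
    "pitch_shift": 0.8,     # change in vocal pitch during calls
    "negative_tone": 1.2,   # share of messages scored as negative
    "sleep_change": 0.6,    # shift in inferred sleep schedule
    "fewer_contacts": 0.9,  # drop in frequency of social interactions
}
BIAS = -2.0  # assumed offset so that "no signals" maps to a low score


def stress_probability(features):
    """Combine normalized signals (0.0 to 1.0) into a score between 0 and 1."""
    score = BIAS + sum(
        weight * features.get(name, 0.0) for name, weight in WEIGHTS.items()
    )
    # Logistic function squashes the weighted sum into the range (0, 1).
    return 1.0 / (1.0 + math.exp(-score))


quiet_week = stress_probability({})
busy_week = stress_probability(
    {"pitch_shift": 1.0, "negative_tone": 1.0, "sleep_change": 1.0, "fewer_contacts": 1.0}
)
print(f"no signals: {quiet_week:.2f}, all signals: {busy_week:.2f}")
```

Even in this toy form, the output is only as meaningful as the chosen weights, which is why the resulting number reads as a statistical guess rather than a diagnosis.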

The Context Gap: Statistical Probabilities Versus Human Reality

A significant risk inherent in these diagnostic tools is the profound disconnect between a statistical signal and the actual lived experience of a human being. Algorithms are designed to identify deviations from a calculated “normal” baseline, but they frequently lack the necessary context to interpret why those deviations occur. For example, a decrease in speech velocity might be flagged by a system as a primary indicator of clinical depression, yet the reality could be that the individual is simply exhausted after a long shift or is communicating in a second language. This lack of nuance is particularly dangerous for neurodivergent individuals or those from diverse cultural backgrounds whose natural communication styles may not align with the Western-centric datasets used to train these models. When a machine misinterprets a personality trait or a temporary environmental factor as a mental health crisis, the consequences can be life-altering, as the resulting “data” begins to define the individual’s professional identity without their knowledge or input.
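The context gap is easy to see in a simplified version of baseline comparison. The sketch below assumes a system that flags any measurement falling more than two standard deviations from a person's own history; the `flag_deviation` function, the threshold, and the speech-rate numbers are hypothetical and chosen only to illustrate the point.

```python
from statistics import mean, stdev


def flag_deviation(baseline, observed, threshold=2.0):
    """Return (z_score, flagged) for one observation against a personal baseline."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # No variation in the baseline, so a z-score cannot be computed.
        return 0.0, False
    z = (observed - mu) / sigma
    return z, abs(z) >= threshold


# Hypothetical speech rates in words per minute from earlier meetings.
baseline_wpm = [148, 152, 150, 155, 145, 151, 149]

# A slow day: the cause could be illness, fatigue, or speaking a second language.
z, flagged = flag_deviation(baseline_wpm, 120)
print(f"z = {z:.2f}, flagged = {flagged}")
```

The calculation sees only that 120 words per minute is far from this person's average. Nothing in the arithmetic distinguishes a depressive episode from a night shift, which is exactly the information a human reviewer would ask for first.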

Institutional Implications and Ethical Boundaries

From Health Support to High-Stakes Monitoring

The transition of psychological states into quantifiable data points has fundamentally altered the power dynamic between institutions and individuals. Once a well-being app generates a report on an employee’s mental stability, that information rarely stays confined to a healthcare context; instead, it enters a gray area where it can influence critical institutional decisions. There is a growing concern that these “early-warning” labels will be used by human resources departments to determine who is considered reliable for a promotion or who might be the first to go during a corporate restructuring. This effectively moves mental health monitoring from the private, protected sphere of a doctor’s office into a form of high-stakes surveillance. In 2026, the possibility that this data could be shared with insurance providers or future employers raises the prospect of a permanent digital record of a person’s perceived emotional vulnerability, often without the subject ever having the opportunity to contest the algorithm’s findings or provide context for their behavior.

Demanding Accountability: The Path Toward Transparent Systems

Addressing the ethical challenges posed by automated well-being tools requires a significant shift in how technology is implemented within professional and academic settings. The rapid deployment of these systems has outpaced the development of necessary regulatory frameworks, leaving users vulnerable to unannounced monitoring. To rectify this, the industry must prioritize a mandate of absolute transparency, where institutions are legally required to inform users whenever their behavioral data is being analyzed for psychological insights. Furthermore, these tools should undergo rigorous, independent audits to ensure they do not harbor biases against specific demographics or communication styles. Moving forward, the most effective path involves re-centering the human element by ensuring that AI serves only as a preliminary aid for qualified healthcare professionals rather than a standalone judge of human character. By establishing clear boundaries and demanding empirical validation for the claims these tools make, society can reclaim the right to psychological privacy while still leveraging technology to support genuine mental health initiatives.