Chloe, thanks for having me. I’ve spent my career building robotics and IoT systems that survive chaos: ambulances, rural clinics with flickering power, and ORs where seconds matter. Ultrasound-on-a-chip is a watershed moment because it collapses three barriers at once: cost, portability, and usability. In this conversation we’ll unpack how chip-based probes with 9,000 tiny sensors, FDA-cleared AI for gestational age, and third‑party apps for diagnostic‑quality cardiac imaging can shift care from centralized carts that run $30,000 to $200,000 to $4,000 handhelds with memberships. We’ll talk frontline workflow redesign, validation across operators, building national-scale programs when two-thirds of the world lacks access to imaging, and the hard economics, like a $15 million upfront payment plus $10 million annually for generative AI, needed to turn strong revenue into sustainable impact.

You’ve emphasized making ultrasound low cost, mobile, and easy to use. What concrete problems in everyday care are you targeting first, and how will you measure success in months, not years? Can you share specific deployment timelines, training steps, and adoption metrics you expect?

I’m targeting three day-one problems: ruling in or out fluid in the lungs, confirming early intrauterine pregnancy, and quickly screening cardiac function when shortness of breath walks in. Those are the scenarios where waiting three hours, like the ER story we’ve all lived, creates anxiety and unnecessary costs. In the first 90 days, I focus on fast setup—devices ordered, accounts provisioned, and brief, role-based training sessions that lean on AI guidance cleared for gestational age and a third‑party tool for diagnostic‑quality cardiac scans. We measure success weekly by scans per device, percentage of visits with a scan within minutes of arrival, and how often POCUS avoids downstream imaging; because the hardware is ~$4,000 versus $30,000–$200,000 carts, you can see changes within a single quarter even before enterprise integrations are finished.
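As a rough illustration of the weekly metrics named above, the following Python sketch computes scans per device, the share of visits scanned shortly after arrival, and the share of scans that avoided downstream imaging. The `Visit` record, its field names, and the 15-minute threshold are assumptions made for this example; they do not come from any vendor system.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional


@dataclass
class Visit:
    """One patient visit (hypothetical record layout)."""
    arrival: datetime
    scan_time: Optional[datetime] = None      # None if no bedside scan was done
    downstream_imaging_avoided: bool = False  # assumed to be flagged by the clinician


def weekly_metrics(visits: List[Visit], device_count: int, max_minutes: int = 15) -> dict:
    """Summarize one week of visits into the three adoption metrics."""
    max_wait = timedelta(minutes=max_minutes)
    scanned = [v for v in visits if v.scan_time is not None]
    fast = [v for v in scanned if v.scan_time - v.arrival <= max_wait]
    avoided = [v for v in scanned if v.downstream_imaging_avoided]
    return {
        "scans_per_device": len(scanned) / device_count if device_count else 0.0,
        "pct_visits_scanned_fast": 100 * len(fast) / len(visits) if visits else 0.0,
        "pct_scans_avoiding_imaging": 100 * len(avoided) / len(scanned) if scanned else 0.0,
    }
```

Running this on each week's visit log and comparing the outputs week over week gives the kind of trend line described in the answer; the threshold is a parameter so each site can define its own version of "within minutes."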

Many patients wait hours in emergency settings just to access imaging. How would you redesign front-line workflows so clinicians scan at the bedside within minutes? What staffing, credentialing, and quality safeguards are needed, and how would you track false positives and negatives over time?

I move the probe upstream—triage nurse, rapid provider assessment, and a designated “POCUS lead” who floats to coach. Credentialing should leverage structured pathways, pairing basic training with AI guidance so new users don’t fly blind. Quality comes from a feedback loop: every scan saved to PACS, peer review of a sample, and correlation against downstream imaging when available, with flags for suspected false positives and negatives. Over time, we compare these trends by shift and location, and because the same single chip can cover lung, cardiac, and abdominal presets, we remove friction that comes from swapping physical transducers, making “scan in minutes” a habit rather than a heroic act.
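One way to picture the feedback loop is a small tally that compares each bedside read against downstream imaging, when it exists, and reports false positive and false negative rates by shift or location. This is a minimal sketch under assumed field names (`pocus_positive`, `reference_positive`, `shift`); a real QA program would pull these from PACS and peer review records.

```python
from collections import defaultdict


def error_rates_by_group(scans, group_key="shift"):
    """Tally TP/FP/TN/FN per group and derive false positive/negative rates.

    Each scan is a dict with hypothetical keys:
      pocus_positive     - bool, the bedside finding
      reference_positive - bool or None, downstream imaging result if available
      <group_key>        - e.g. "shift" or "location"
    Scans without a reference result are skipped.
    """
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "tn": 0, "fn": 0})
    for scan in scans:
        reference = scan.get("reference_positive")
        if reference is None:
            continue  # no downstream imaging to correlate against
        if scan["pocus_positive"]:
            cell = "tp" if reference else "fp"
        else:
            cell = "fn" if reference else "tn"
        counts[scan[group_key]][cell] += 1

    rates = {}
    for group, c in counts.items():
        positives = c["tp"] + c["fn"]  # reference-positive cases
        negatives = c["tn"] + c["fp"]  # reference-negative cases
        rates[group] = {
            **c,
            "false_negative_rate": c["fn"] / positives if positives else None,
            "false_positive_rate": c["fp"] / negatives if negatives else None,
        }
    return rates
```

Calling it with `group_key="location"` instead of `"shift"` gives the second comparison mentioned above. Because only scans with downstream imaging are counted, the rates describe the correlated subset, not every scan performed.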

Your probe uses a single semiconductor chip with thousands of sensors to cover multiple frequencies. How does this translate into real-world image quality across lung, cardiac, and abdominal use cases? What trade-offs remain versus cart systems, and how do you validate performance across operators?

The 9,000 tiny sensors let you drive high frequencies for pleural line detail and drop lower to penetrate the heart or abdomen, all without swapping probes. In lung, that agility makes A- and B-line assessment crisp; in cardiac, you get enough depth and frame stability for the third‑party diagnostic-quality workflow to lock in views. Abdominal scanning benefits from rapid preset switching—one probe, multiple targets—which matters in a cramped bay. Trade-offs remain in extreme niche imaging where premium carts can justify $200,000 price tags, but we validate by standardizing protocols, archiving anonymized clips, and running cross-operator reviews so technique, not technology, becomes the main source of variation.

Traditional systems can cost tens to hundreds of thousands of dollars, while handhelds are near a few thousand plus subscriptions. How should health systems model total cost of ownership, including training, AI add-ons, and device lifecycle? What payback periods have you seen in primary care, ED, and ICU settings?

Start with capital: $4,000 per unit scales very differently than $30,000–$200,000 carts. Layer in memberships for advanced modes and AI, such as the gestational age tool and the cardiac diagnostic assistant, and allocate training time as a program cost, not an afterthought. Then model avoided downstream tests, faster disposition, and reduced transfers; even a handful of prevented referrals can outpace membership fees in a quarter. I’ll avoid quoting new payback numbers here, but the cost delta is so large that, with steady utilization, many sites see meaningful financial impact well inside a year.
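The modeling approach described here can be sketched as a simple payback calculation: upfront device and training cost divided by monthly savings net of membership fees. Only the $4,000 device price comes from this conversation; the function names and every other input are placeholders that a health system would replace with its own figures.

```python
from typing import Optional


def total_cost(device_cost: float, training_cost: float,
               monthly_membership: float, months: int) -> float:
    """Total cost of ownership for one device over a given number of months."""
    return device_cost + training_cost + monthly_membership * months


def payback_months(device_cost: float, training_cost: float,
                   monthly_membership: float, monthly_savings: float) -> Optional[float]:
    """Months until cumulative net savings cover the upfront spend.

    monthly_savings is the site's own estimate of avoided downstream tests,
    faster disposition, and reduced transfers. Returns None when savings do
    not exceed the membership fee, since the device never pays back.
    """
    upfront = device_cost + training_cost
    net_monthly = monthly_savings - monthly_membership
    if net_monthly <= 0:
        return None
    return upfront / net_monthly
```

For example, `payback_months(4000, 1000, 0, 500)` returns `10.0` months. Those inputs are purely illustrative and are not reported results from any site.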

With more than 150,000 devices reportedly in the field, what usage patterns stand out by care setting? Where do scans per device surge, where do they stall, and what have you learned about onboarding, super-user development, and sustaining utilization after six and twelve months?

Scale teaches you that environment dictates behavior. Major hospital systems tend to spike early when a super-user champions bedside lung and cardiac presets; rural clinics climb steadily as prenatal and abdominal scans become woven into routine visits. Utilization stalls when onboarding stops at the ribbon cutting—without a named super-user and scheduled refreshers, the probe drifts into a drawer. The best six- and twelve‑month curves pair peer coaching with clear goals, archiving workflows, and recognition—once clinicians see how a $4,000 device spares a patient a three‑hour wait, momentum builds.

How do you design for extreme environments like rural clinics, conflict zones, or spaceflight? What have field deployments taught you about durability, battery life, infection control, and offline workflows? Which design changes came directly from those lessons?

You design for dust, drops, and dead outlets. Deployments with medics in Ukraine and even on the International Space Station remind you that power isn’t guaranteed, so we optimize charge cycles and make offline modes first-class citizens, not backups. Infection control drives sealed surfaces and quick-swap covers; rural clinics push for simple, glove-friendly controls. Direct changes included hardening ports, extending battery life targets, and prioritizing on-device presets so that whether you’re at a roadside or in microgravity, lung-to-cardiac switching takes a thumb press, not a network connection.

Two thirds of the world lacks access to imaging. Which countries or care tiers are highest priority, and how will you tackle power, connectivity, and supply chain barriers? What financing models, partnerships, and training pipelines can scale beyond pilots into national programs?

Primary care and maternal–child health tiers are my first stop—midwives, community clinics, and district hospitals. Power and connectivity get bridged with battery‑forward kits and offline-first software; supply chains leverage regional hubs so a probe doesn’t spend months in customs. Financing often blends public procurement with philanthropy and enterprise discounts; partnerships with ministries and NGOs turn 100‑site pilots into national playbooks. Training pipelines pair local super-users with AI guidance—like the FDA-cleared gestational age support—so competency grows even when trainers can’t be on-site every week.

You distributed hundreds of devices to clinics in East Africa. What happened after the ribbon-cutting—who trained whom, how often were scans performed, and what conditions were most impacted? What retention, maintenance, and clinical-outcome data will guide the next expansion?

In 2022, 500 devices went to health centers in Kenya, and the story didn’t end with photos and a plaque. Local clinicians trained peers, anchored by structured modules and AI support for prenatal assessments; lung and basic cardiac scans followed as confidence grew. Maintenance relied on simple swap programs and spare cables, because a broken connector shouldn’t sideline a clinic for a season. For expansion, we look at retention of trained users, uptime, and condition-specific outcomes—prenatal timing with the new gestational age tool is a prime candidate to quantify impact at scale.

Competitors include several major handheld platforms. Where do you see meaningful differentiation—in image quality, AI guidance, price, ecosystem, or regulatory coverage? What objective benchmarks or head-to-head studies would you point to, and what gaps remain to close?

Differentiation starts with the single probe that shifts frequencies via chip control, covers multiple use cases, and comes in at about $4,000—versus replacing or swapping multiple transducers. AI is another wedge: FDA clearance for gestational age and access to a third‑party cardiac tool elevate non-expert performance. I’d point decision-makers to regulatory clearances and standardized image sets reviewed by blinded clinicians; objective archiving and cross‑site audits beat marketing slides. Gaps remain at the far ends of performance—ultra-specialized cart workflows—and we should keep running head‑to‑head reader studies until those lines are bright.

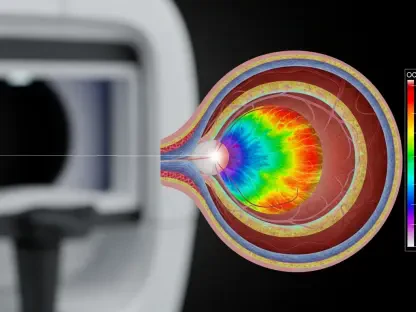

AI guidance can unlock use by non-experts. Which scan types benefit most today, and how do you balance guidance with clinician autonomy? How do you measure AI safety, bias, and drift, and what is your process for post-market surveillance and rapid model updates?

Prenatal biometry and core cardiac windows are clear beneficiaries—hence the FDA-cleared gestational age tool and diagnostic‑quality cardiac support. Guidance should feel like cruise control: easy to engage, easy to override, and constantly reminding you that the clinician is the pilot. We watch safety by correlating AI‑assisted findings with outcomes, monitoring for bias across settings—from major hospital systems to rural clinics—and tracking drift over time. Post‑market, every update ships with audit trails, rollback plans, and targeted education so the bedside experience improves without surprises.

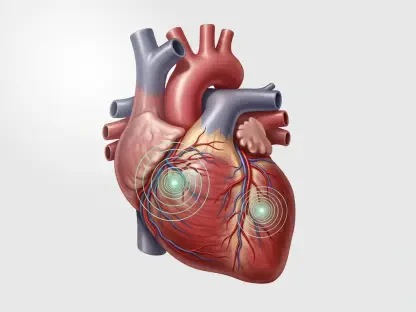

You recently gained FDA clearance for gestational age estimation and support diagnostic-quality cardiac scans. How will you integrate these into prenatal and cardiovascular pathways? What training, supervision, and billing workflows are required, and how will you validate outcomes at scale?

Prenatal pathways start at first contact—community clinics use the gestational age tool to anchor timing, while flagged cases route to higher tiers. Cardiovascular integration means structured point‑of‑care scans feeding into cardiology for confirmation, with the third‑party diagnostic workflow ensuring images meet the bar. Training pairs brief competency checks with on-device tips; supervision escalates anomalous findings to specialists, and billing follows existing POCUS codes where applicable. At scale, we validate by archiving studies, aligning with EHRs and PACS, and comparing outcomes against standard care across thousands of scans, not dozens.

Partnerships with generative AI carry substantial licensing costs. What capabilities do you gain that you couldn’t build in-house, and how do you justify the economics? What milestones will signal ROI, and how will you avoid vendor lock-in or model obsolescence?

A co‑development and licensing deal that includes a $15 million payment plus $10 million annually buys acceleration—language interfaces, image-to-text narratives, and tooling that would take years to replicate. The economics hinge on faster adoption, more scans per device, and reduced documentation burden that frees clinicians to scan rather than type. ROI shows up when usage curves bend upward and support tickets trend down because guidance and reporting “just work.” Avoiding lock‑in means modular APIs, model checkpoints we can validate independently, and the option to swap components without ripping out the whole stack.

The company reports strong revenue yet ongoing net losses. What are the three most important levers to reach profitability—gross margin, subscription attach, or enterprise deals? What concrete targets and timelines are you setting, and how will you phase R&D versus go-to-market spend?

With $97.6 million in revenue and a $77.1 million net loss last year, the levers are clear: keep improving gross margin as volumes scale, attach high‑value subscriptions, and land enterprise deals that standardize workflows across sites. I’d phase R&D to sustain the chip roadmap and core AI like gestational age, while pushing go‑to‑market to deepen hospital, rural, and global health penetration. Targets should be quarter-by-quarter: more devices activated, higher subscription penetration, and rising scans per device sustained beyond month three. When those three lines rise together, profitability shifts from slideware to reality.

Looking ahead, you’ve mentioned new form factors like patches or endoscopic integration. Which clinical problems would those solve first, and what regulatory and reimbursement paths do you anticipate? What technical hurdles—power, heat, coupling—are toughest, and how will you test them?

Patches could watch lungs for evolving fluid or track fetal parameters without constant repositioning; endoscopic integration could bring real-time imaging to procedures that currently rely on feel. Regulatory paths will echo existing ultrasound indications, but human factors and long-wear safety will be front and center for patches. Technically, power density, heat dissipation, and coupling over time are the knottiest problems; a chip that once lived in a handheld now has to run quietly and cool on skin. We’ll test with benchtop phantoms, wear studies, and controlled clinical pilots before broad release.

Beyond image acquisition, how will you standardize reporting, archiving, and interoperability with EHRs and PACS? What’s your plan for credentialing, QA programs, and medico-legal coverage as more clinicians scan? Which metrics will define “diagnostic-grade” performance across sites?

Standardization starts with structured templates that auto-populate from AI hints and push clean summaries into the EHR while archiving full clips to PACS. Credentialing becomes an ongoing journey—initial sign-off, periodic refreshers, and QA that samples studies across shifts and roles. Medico-legal coverage improves when documentation is consistent, peer review is regular, and FDA-cleared tools—like gestational age and cardiac support—are used within defined indications. Across sites, “diagnostic-grade” means reproducible images, correlation with outcomes, and adherence to protocols so a scan in a city ICU looks as reliable as one in a rural clinic.

Do you have any advice for our readers?

Start small, start now, and design for the hand that holds the probe. Pick three use cases, appoint a super-user, and wire archiving and QA from day one. Lean on what’s proven—chip-based probes with 9,000 sensors, FDA-cleared gestational age, and diagnostic-quality cardiac support—and let real-world feedback, not perfectionism, guide the next step. The distance between a three‑hour wait and a two‑minute bedside scan is a deliberate workflow, not a miracle; build that bridge.