Imagine a healthcare system where artificial intelligence (AI) predicts patient outcomes accurately, streamlines administrative work, and eases clinician burnout. This vision drives innovation, and more than 80% of health executives see transformative potential in AI, yet they also acknowledge that the path to safe adoption remains fraught with obstacles. This roundup draws on perspectives from industry leaders, consultancy surveys, and health system reports to examine the complexities of integrating AI into healthcare. It synthesizes differing opinions and practical tips, covering both the promise and the pitfalls of AI in medical settings.

Exploring the AI Revolution in Healthcare: Diverse Views on Potential

Industry leaders widely recognize AI as a game-changer for healthcare, with many pointing to its capacity to enhance clinical decision-making and operational efficiency. Data from recent surveys indicate that a significant majority of hospital executives see AI as a tool to address systemic challenges like workforce shortages and rising costs. This optimism stems from the belief that AI-driven analytics can reduce manual workloads and improve patient care through precise diagnostics.

However, not all perspectives align on the immediacy of AI’s impact. Some health system administrators caution that while the potential exists, the technology is still in its early stages, requiring substantial refinement before it can be relied upon for critical decisions. This divergence highlights a spectrum of enthusiasm tempered by pragmatic concerns about readiness and scalability in real-world applications.

A recurring theme across discussions is the urgent need for balance between innovation and risk management. Reports from healthcare consultancies emphasize that AI could be a lifeline for an industry under strain, yet the consensus leans toward a measured approach to ensure patient safety isn’t compromised by untested systems. This blend of hope and caution sets the stage for deeper exploration into specific challenges.

Unpacking Barriers to AI Integration: A Spectrum of Concerns

Optimism Tempered by Doubt: Perceptions of AI’s Power

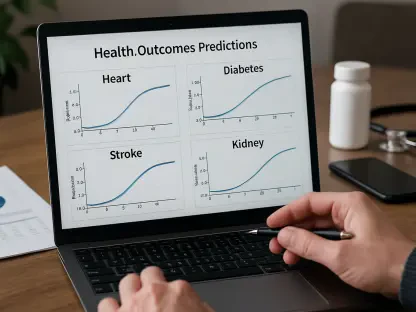

Across multiple industry analyses, there is a strong belief that AI can revolutionize healthcare by supporting clinical decisions with data-driven insights. Over 80% of surveyed executives express confidence in AI’s ability to transform how care is delivered, citing examples like predictive models for patient outcomes. This enthusiasm is fueled by the prospect of reducing errors and enhancing precision in diagnostics.

Yet, beneath this positivity lies a layer of uncertainty shared by many in leadership roles. Observations from health system forums reveal a gap between AI’s theoretical benefits and the practical hurdles of implementation, such as integrating systems into existing workflows. This skepticism underscores a critical need for validation and trust-building before widespread adoption can occur.

Further insights suggest that AI is often seen as a remedy for clinician burnout, with numerous executives pointing to its potential to automate repetitive tasks. However, the challenge remains in ensuring these tools are intuitive and reliable enough to gain acceptance among frontline staff. This duality of promise and doubt shapes much of the current discourse.

Trust Issues: Data Privacy and Algorithm Reliability Concerns

A major sticking point echoed across health industry roundtables is the pervasive concern over data privacy and security. Statistics from recent studies show that nearly 70% of executives view these issues as primary barriers, fearing that breaches could undermine patient trust. This apprehension is compounded by the reluctance of many health systems to expose sensitive information to AI platforms.

Algorithmic reliability also draws significant scrutiny, with only about 12% of leaders expressing confidence in current AI tools for clinical use. Feedback from hospital networks highlights fears of biased outputs leading to incorrect diagnoses, a risk deemed unacceptable in high-stakes environments. These concerns point to an urgent need for robust testing protocols.

Moreover, the potential for patient harm due to flawed AI systems is a recurring worry in industry discussions. Many health leaders advocate for stricter oversight to prevent operational failures, suggesting that without improved governance, adoption will remain limited. This shared focus on risk mitigation reflects a collective call for safer frameworks.

Cautious Progress: Investment Trends and Adoption Pace

Investment in AI is on the rise, with approximately two-thirds of health systems allocating resources to explore its applications, according to aggregated industry reports. However, only about 10% adopt an aggressive stance, indicating a preference for gradual implementation. This caution is often attributed to the severe consequences of errors in healthcare settings.

Regional differences in adoption speed also emerge in various analyses, with some urban health systems moving faster due to access to resources, while rural counterparts lag behind. Perspectives on this disparity suggest that regulatory clarity could accelerate progress across the board. Such variations underscore the uneven landscape of AI integration.

Contrary to assumptions that slow adoption signals failure, several industry voices argue that a deliberate pace may yield more sustainable results. This viewpoint posits that taking time to refine systems and build trust can prevent costly missteps, offering a counter-narrative to the rush for rapid deployment. It’s a reminder that patience can be a strategic asset.

Ethical Challenges and Governance Demands in AI Use

Ethical considerations frequently surface in discussions about AI in healthcare, with around 36% of executives concerned that biased datasets could perpetuate inequities. Insights gathered from health policy panels stress that unless these biases are addressed, AI risks exacerbating existing disparities in care delivery. Ensuring fairness therefore demands immediate attention.

Approaches to governance vary widely, as highlighted in comparative studies of health systems. Some organizations prioritize continuous monitoring of AI outputs, while others focus on strategic planning to align technology with ethical standards. Expert opinions gathered from industry webinars advocate for a hybrid model that combines both tactics to safeguard against unintended consequences.

Looking ahead, evolving standards could reshape how AI is deployed, according to speculative analyses from healthcare forums. The question remains how these frameworks will balance innovation with accountability, a topic that continues to spark debate. This ongoing conversation emphasizes the importance of responsible development in maintaining public trust.

Practical Strategies for Safe AI Adoption: Collective Wisdom

Synthesizing insights from multiple sources, the balance between AI’s transformative potential and its inherent risks stands out as a central theme. Health executives are encouraged to prioritize robust governance frameworks that include regular audits and transparent reporting to address data security concerns. This step is seen as foundational to building confidence in AI tools.

Another widely recommended strategy involves investing in high-quality data to minimize biases and enhance algorithm reliability. Contributions from industry workshops suggest starting with low-risk applications, such as administrative automation, before scaling to clinical uses. This phased approach allows for iterative improvements based on real-world feedback.

Collaboration across sectors also emerges as a key tip from health technology summits, with many advocating for partnerships between hospitals, tech developers, and regulators. Such alliances can foster shared learning and accelerate the development of best practices. These actionable steps provide a roadmap for leaders navigating this complex landscape.

Reflecting on the Path Traveled: Next Steps for AI in Healthcare

Looking back, the discourse surrounding AI adoption in healthcare reveals a profound tension between innovation and caution, as industry leaders grapple with both the technology’s promise and its challenges. The collective insights point to significant barriers like data privacy and trust in algorithms, which slow progress despite widespread optimism.

Moving forward, health executives are advised to focus on small, manageable pilot projects to test AI capabilities in controlled environments, thereby reducing risks. Establishing cross-industry task forces to develop unified ethical guidelines is also seen as a critical next step to ensure consistency and accountability.

Additionally, investing in training for staff to interact with AI systems emerges as an essential consideration to bridge the gap between technology and human expertise. These actionable measures, drawn from the roundup of perspectives, offer a clear direction for harnessing AI’s potential while safeguarding patient welfare in the evolving healthcare landscape.